It installs separately and has its own user preferences, and it can run alongside the release version. And they gain the benefits of revamped Character Animator organization tools, including the ability to filter the timeline to focus on individual puppets, scenes, audio, or keyframes.Ĭharacter Animator 3.4 beta is available for download via the Creative Cloud desktop application and includes an in-app library of starter puppets and tutorials. Merge Takes lets them combine multiple Lip Sync or Trigger takes into a single row.

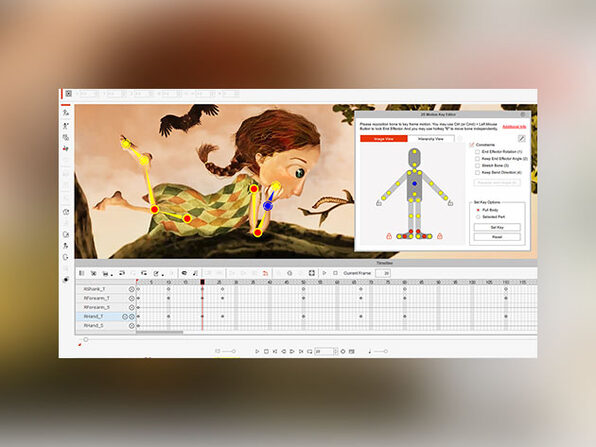

Using the new Set Rest Pose option, users can animate back to the default position when they recalibrate, so they can use it during a live performance without causing their character to jump abruptly. Pin Feet When Standing complements Limb IK it’s a new option that keeps characters’ feet grounded when they’re not walking, leading to more realistic mid-body poses, like squats. It controls the bend directions and stretching of legs as well as arms, allowing artists to pin hands in place while moving the rest of the body. Limb IK, previously Arm IK, is in tow with the new Character Animator. The latest release of Character Animator’s lip-sync engine - Lip Sync - improves automatic lip-syncing and the timing of mouth shapes called “visemes.” Both viseme detection and audio-based muting settings can be adjusted via the settings menu, where users can also fall back to an earlier engine iteration. And stop-motion animation studios like Laika are employing AI to automatically remove seam lines in frames. Pixar is experimenting with AI and general adversarial networks to produce high-resolution animation content, while Disney recently detailed in a technical paper a system that creates storyboard animations from scripts. All the visemes data is copied to the system clipboard as Time Remap keyframe data for use in After Effects. In the Timeline panel, right-click a Lip Sync take > Copy Visemes for After Effects.

Several - including Speech-Aware Animation and Lip Sync - are powered by Sensei, Adobe’s cross-platform machine learning technology, and leverage algorithms to generate animation from recorded speech and align mouth movements for speaking parts.ĪI is becoming increasingly central to film and television production, particularly as the pandemic necessitates resource-constrained remote work arrangements. Take Character Animator visemes into After Effects for use on different characters by following these steps. Learn MoreĪdobe today announced the beta launch of new features for Adobe Character Animator (version 3.4), its desktop software that combines live motion-capture with a recording system to control 2D puppets drawn in Photoshop or Illustrator. Join top executives in San Francisco on July 11-12, to hear how leaders are integrating and optimizing AI investments for success. Professionals have been animating faces by hand since even before Disney came out with their first cartoon classic in 1937, Snow White and the Seven Dwarfs.
